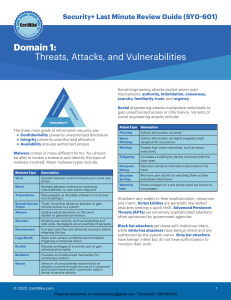

CompTIA Security+ All-in-One Exam Guide, Sixth Edition (Exam SY0-601) (Wm. Arthur Conklin, Greg White, Dwayne Williams, et al.)
