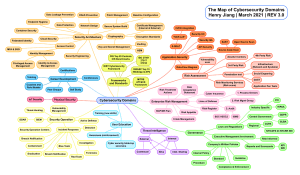

CC Certified in Cybersecurity All-in-One Exam Guide (Steven Bennett)
